The Closed-Timelike-Curve Problem in Software

Simulating Deutsch's CTC consistency condition on classical hardware

In 1991, David Deutsch published “Quantum mechanics near closed timelike lines”, a paper on how quantum systems behave in the presence of closed timelike curves. The question at its centre was gloriously awkward: if a particle goes back in time and interacts with its earlier self, what happens when the two disagree?

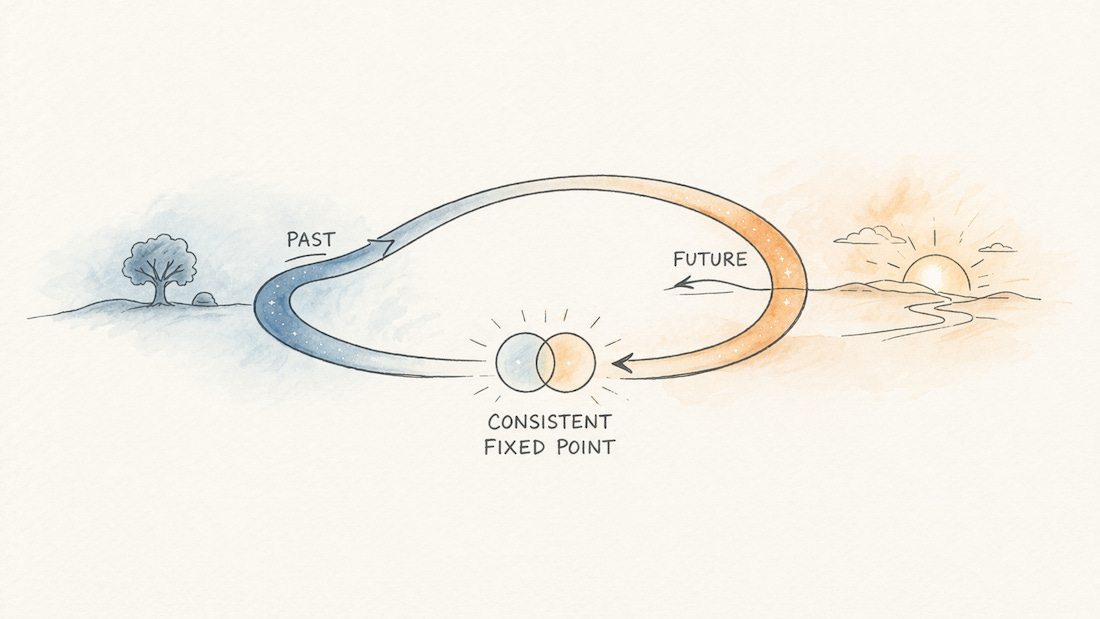

Deutsch’s answer was not that the universe explodes, or that time travel is therefore impossible. His answer was stranger, and much more interesting. The system settles. Past and future exchange information until they arrive at a state that is self-consistent across the loop. If no single state can satisfy both ends, then what remains may be a mixture: several states, each individually consistent, held together at once.

This sounds like physics behaving badly on purpose. But it also turns out to be a surprisingly good description of a problem software has been quietly running into for years.

What should a program do when a value depends on itself? When the output of a calculation is also one of its inputs? When two parts of a system need to agree, but neither can finish until the other has already done so?

Most libraries solve this by sweeping the loop out of sight. A few confront it directly. And one or two get surprisingly close to Deutsch’s original answer: let the loop run, see what stabilises, and if the answer turns out to be plural, report that honestly rather than filing a complaint.

What is striking is not just that these systems solve similar problems. It is that they each embody a different attitude towards paradox. Some are strict. Some are elegant. Some are probabilistic. Some are effectively saying, “please do not make the timeline weird in the first place.”

And then there are the ones willing to let it get weird and see what survives.

Mariposa: Time Travel With a Stern Referee

Mariposa is the most literal system in this landscape. It gives you explicit time-travel primitives. You can name a moment in execution, return to it, and change something that has already happened. It feels less like a library and more like a debugger that has been given constitutional authority.

Using it is oddly satisfying, though in a rather rule-bound way. It feels like being handed a time machine by someone who has read every time-travel story ever written and trusted none of the protagonists. You can go back. You can edit the past. But if you try to change something in a way that contradicts a decision made downstream, the runtime stops you immediately.

In Deutsch’s terms, Mariposa solves the CTC problem by refusing to allow inconsistent loops to exist at all. The consistency condition is enforced at the moment of mutation. If the past and the future cannot agree, the program terminates rather than negotiate.
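To make "enforced at the moment of mutation" concrete, here is a minimal sketch in Python. It is not Mariposa's API: `Timeline`, `decide`, `rewrite_past` and `TimelineContradiction` are hypothetical names invented for illustration. The sketch assumes that the only thing the runtime tracks is which downstream decisions depended on which earlier values.

```python
class TimelineContradiction(Exception):
    """Raised when an edit to the past contradicts a decision made later."""


class Timeline:
    """Hypothetical recorder: remembers writes and the decisions that read them."""

    def __init__(self):
        self.values = {}      # name -> current value
        self.decisions = []   # (name, predicate, outcome) seen downstream

    def write(self, name, value):
        self.values[name] = value

    def decide(self, name, predicate):
        """Branch on a value and remember the outcome later code relied on."""
        outcome = predicate(self.values[name])
        self.decisions.append((name, predicate, outcome))
        return outcome

    def rewrite_past(self, name, new_value):
        """Edit an earlier write; refuse if any recorded decision would flip."""
        for decided_name, predicate, outcome in self.decisions:
            if decided_name == name and predicate(new_value) != outcome:
                raise TimelineContradiction(
                    f"{name}={new_value!r} contradicts a downstream decision"
                )
        self.values[name] = new_value


timeline = Timeline()
timeline.write("fuel", 10)
launched = timeline.decide("fuel", lambda fuel: fuel > 5)  # later code relies on this

timeline.rewrite_past("fuel", 8)       # allowed: the launch decision still holds
try:
    timeline.rewrite_past("fuel", 2)   # refused: the launch decision would flip
    verdict = "accepted"
except TimelineContradiction:
    verdict = "rejected"
```

The check runs inside `rewrite_past`, before the value changes, so an inconsistent loop never exists even briefly. Nothing here tries to find a value of `fuel` that would have worked.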

This is a principled position, if your principles include “panic the moment the timeline starts acting up.” It keeps the world coherent. What it does not do is help you discover what the coherent answer would have been. It knows when the loop has misbehaved. It is much less interested in helping you rehabilitate it.

Tardis: Elegant Knot-Tying in Haskell

Tardis takes a much more restrained approach. It is a Haskell library in which state can travel both forwards and backwards through a computation at once. Values from later in the program can be available earlier; values from earlier can flow later.

At its best, using it feels beautiful. Not “nice,” not merely “clever,” but genuinely beautiful in the infuriating way that certain Haskell ideas are beautiful: they make something difficult look as if it ought always to have been obvious. Algorithms that would normally require two passes, or an explicit fixpoint combinator, or a slightly embarrassing mutable accumulator, can collapse into one neat traversal.

But Tardis is not really about conflict. It is about elegant self-reference. The forward-travelling state and backward-travelling state are not there to argue with one another. They are there to meet cleanly in the middle, and the programmer is expected to arrange the meeting.

In Deutsch’s framing, Tardis assumes the consistency condition has effectively already been satisfied. It does not search for the fixed point so much as let you write one down in a very graceful way. If you have identified the right structure, it sings. If you have not, it may diverge or produce bottom and then stare at you with the serene expression of a library that feels this was really your responsibility.
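The flavour of this can be imitated outside Haskell, clumsily, with explicit thunks. The sketch below is Python rather than Haskell and does not use the Tardis API. `replace_with_max` and the `future` dictionary are hypothetical stand-ins for a value that is referred to before it is known. Forcing a thunk before the knot is tied fails, which is a rough analogue of hitting bottom.

```python
def replace_with_max(items):
    """Replace every element with the list's maximum, building the output
    during the same traversal that is still discovering the maximum."""
    future = {}           # stands in for state arriving from later in the run
    running_max = None    # ordinary state travelling forwards
    thunks = []
    for item in items:
        running_max = item if running_max is None else max(running_max, item)
        thunks.append(lambda: future["max"])  # refers to a value not yet known
    future["max"] = running_max               # the knot is tied here
    return [thunk() for thunk in thunks]      # forcing is safe only after this


result = replace_with_max([3, 1, 4, 1, 5])

# Forcing a reference to the future before it has been supplied fails outright.
unfinished = {}
too_early = lambda: unfinished["max"]
try:
    too_early()
    forced_early = "ok"
except KeyError:
    forced_early = "undefined"
```

The programmer arranges the meeting: the code works only because `future["max"]` is filled in before anything reads it. The sketch verifies nothing; it simply trusts that the structure is right.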

So Tardis does not solve the paradox for you. It assumes you have already persuaded the loop to behave, and rewards you with lovely notation for describing the result.

Probabilistic Languages: Consistency as a Matter of Probability

Probabilistic programming languages such as Church, WebPPL, Pyro, and Infer.NET approach the same problem from an entirely different angle.

Working in this world feels less like programming in the ordinary sense and more like formalising a hunch. You describe a generative process. You specify observations. The system then asks which hidden states, which possible pasts, would make those observations plausible, and with what weight.

This turns Deutsch’s consistency condition into something Bayesian. Contradictory states do not throw errors. They simply receive probability zero and vanish from the posterior. States that are improbable but coherent remain, weighted according to how well they explain the evidence. The future constrains the past, not by literally rewriting it, but by ruling out the versions of it that no longer make sense.
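A toy version of that filtering can be written by hand, without any of the libraries above. The sketch below does exact conditioning by enumeration over three hidden causes. The scenario, the numbers, and the name `condition_on_wet_grass` are all invented for illustration.

```python
from fractions import Fraction as F

# Hypothetical prior over hidden causes, and how likely each makes wet grass.
prior = {"rain": F(3, 10), "sprinkler": F(1, 10), "dry": F(6, 10)}
wet_given = {"rain": F(9, 10), "sprinkler": F(8, 10), "dry": F(0)}


def condition_on_wet_grass(prior, wet_given):
    """Weight each hidden cause by how well it explains the observation,
    drop the ones with zero weight, and renormalise what is left."""
    weights = {cause: prior[cause] * wet_given[cause] for cause in prior}
    total = sum(weights.values())
    if total == 0:
        raise ValueError("no hidden cause is consistent with the observation")
    return {cause: w / total for cause, w in weights.items() if w > 0}


posterior = condition_on_wet_grass(prior, wet_given)
# posterior == {"rain": Fraction(27, 35), "sprinkler": Fraction(8, 35)}
```

The a priori favourite, `dry`, contradicts the observation and disappears without raising anything. `sprinkler` was unlikely to begin with but is coherent, so it stays with a modest weight.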

There is something calm about this. These systems do not mind uncertainty. They are built for it. But they are also quite firm in their own way. A world that cannot be made consistent is not explored further. It is discarded with statistical politeness.

So probabilistic systems do find fixed points, in a sense. But they do so by weighting histories, not by letting contradictions persist as first-class outcomes. They are not saying, “perhaps both answers survive.” They are saying, “some answers survive, and the nonsense has been quietly removed from the sample.”

Which is very tidy. The universe, one suspects, is not always so tidy.

Constraint Systems and Solvers: Consistency as a Search Problem

If the previous approaches feel like refusal, elegance, and statistical filtering, constraint systems feel like patient siege warfare.

Constraint programming, logic engines like miniKanren, truth maintenance systems, and propagator networks all treat the problem as one of mutual compatibility. You do not tell the system exactly how to compute the answer. You specify the relationships that must hold, and let the machinery work out which assignments satisfy them all at once.

Propagator networks are especially good at making this feel tangible. You define cells. You define constraints between them. You inject a value somewhere and watch information seep across the network until no further inferences can be made. Add conflicting information later, and you discover which assumptions can no longer all be true together.

In Deutsch’s framing, this is fixed-point computation in a very direct sense. The loop between values and constraints is iterated until it stabilises. If it stabilises on a satisfying assignment, excellent. If it does not, then the system reports unsatisfiability and carries on with the faint air of a clerk informing you that your forms are incompatible.
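The iterate-until-stable loop is short enough to sketch. What follows is a simplified, arc-consistency-style narrowing over finite sets of candidate values, not a full propagator network and not any particular library's API. `propagate`, `Contradiction`, and the cell names are hypothetical.

```python
class Contradiction(Exception):
    """Raised when some cell is left with no admissible value."""


def propagate(domains, constraints):
    """Narrow each cell's candidate set until a full pass changes nothing."""
    domains = {name: set(values) for name, values in domains.items()}
    changed = True
    while changed:
        changed = False
        for a, b, relation in constraints:
            # Keep only values of a that some value of b can still support,
            # then only values of b supported by what remains of a.
            new_a = {x for x in domains[a] if any(relation(x, y) for y in domains[b])}
            new_b = {y for y in domains[b] if any(relation(x, y) for x in new_a)}
            if not new_a or not new_b:
                raise Contradiction(f"cells {a} and {b} cannot both be satisfied")
            if new_a != domains[a] or new_b != domains[b]:
                domains[a], domains[b] = new_a, new_b
                changed = True
    return domains


less_than = lambda x, y: x < y
constraints = [("x", "y", less_than), ("y", "z", less_than)]

settled = propagate({"x": {1, 2, 3}, "y": {1, 2, 3}, "z": {1, 2, 3}}, constraints)

try:
    # Later, conflicting information arrives: z can no longer be 3.
    propagate({"x": {1, 2, 3}, "y": {1, 2, 3}, "z": {1, 2}}, constraints)
    report = "satisfiable"
except Contradiction:
    report = "unsatisfiable"
```

In the first run the information seeps across the network until `x`, `y` and `z` are pinned to 1, 2 and 3. In the second, one cell runs out of candidates and the clerk returns the forms. Narrowing of this kind only catches contradictions that empty a cell; a real solver would add search on top.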

That is both the strength and the limitation. Constraint systems are systematic, rigorous, and often extremely powerful. But they are not especially interested in the drama of contradiction. They search for satisfying worlds. If no such world exists, that fact is reported, not inhabited.

They do not ask what it would mean for several self-consistent resolutions to coexist as data. They ask which assignments work, and if the answer is none, the universe files the complaint and the solver closes the case.

Reversible Languages: Consistency by Construction

Reversible languages such as Janus and Sparcl come at the problem from a different philosophical direction again.

Their aim is not to tolerate contradictions, or to infer likely histories, or to search over possibilities. Their aim is to make computation invertible, so that every step can be run backwards as well as forwards. You are not allowed to throw information away. Every transformation must retain enough structure to be undone.

Using a reversible language feels constraining in a way that is ultimately clarifying. It is a bit like being forced to write with conservation laws hanging over your shoulder. You cannot take shortcuts by erasing intermediate detail. You cannot casually crush many possible inputs into one convenient output and hope nobody notices. The language notices.

In one sense, this resolves the CTC problem beautifully. If every step is invertible, then forward and backward traversals are guaranteed to line up. The timeline behaves because it has been built from components that are not allowed to misplace information.
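A rough illustration in Python, which is not itself a reversible language: each step below is written as an explicit forward and backward pair, loosely modelled on the kind of in-place updates reversible languages permit. The names `steps`, `run_forward`, `run_backward` and `erase` are hypothetical, and nothing checks that each pair really is an inverse. A real reversible language guarantees that by construction.

```python
def swap(state):
    """Exchange x and y; swapping twice restores the original, so it is its own inverse."""
    return {**state, "x": state["y"], "y": state["x"]}


# Each step is a (forward, backward) pair; backward undoes forward exactly.
steps = [
    (lambda s: {**s, "x": s["x"] + s["y"]}, lambda s: {**s, "x": s["x"] - s["y"]}),
    (lambda s: {**s, "y": s["y"] ^ s["x"]}, lambda s: {**s, "y": s["y"] ^ s["x"]}),
    (swap, swap),
]


def run_forward(state, steps):
    for forward, _ in steps:
        state = forward(state)
    return state


def run_backward(state, steps):
    for _, backward in reversed(steps):
        state = backward(state)
    return state


start = {"x": 3, "y": 4}
end = run_forward(start, steps)
recovered = run_backward(end, steps)   # lands exactly where it started

# A lossy step has no inverse: two different pasts collapse into one present.
erase = lambda s: {**s, "x": 0}
collision = erase({"x": 1, "y": 0}) == erase({"x": 2, "y": 0})
```

`erase` is exactly the kind of step a reversible language refuses to accept, because once two histories have been crushed into one state there is nothing left to run backwards.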

But this also means reversible systems sidestep the most interesting part of the paradox. They avoid the situation in which past and future might genuinely disagree. They do not so much solve inconsistency as build a world in which inconsistency is difficult to express.

That is admirable. It is also a little evasive. Reversible languages are like people who deal with conflict by becoming so impeccably organised that conflict never gets a chance to sit down.

Quantum Simulators: Consistency as the Substrate

Then things get properly strange.

Tools like Tower and Q-Sylvan are not just another programming trick for managing self-reference. They are working in a different medium. Interacting with a quantum decision diagram simulator does not feel like writing ordinary programs at all. It feels more like manipulating mathematical objects that happen, inconveniently, to correspond to computations.

Here the superposition is not metaphorical. It is the data. A state that is simultaneously zero and one is not being approximated through iteration or inferred through evidence. It is represented directly. The multiplicity is the substrate.

That makes these tools unusually close to Deutsch’s original setting. He was not talking about awkward software dependencies with a science-fiction gloss. He was talking about quantum systems evolving in the presence of closed timelike curves. In that picture, the fixed point may genuinely be a mixed state over all self-consistent histories.

Quantum simulators can express that cleanly because they are already built to represent mixed states, amplitudes, density matrices, and the rest of the formal machinery. The consistency condition is not being bolted on. It lives in the mathematics from the start.
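For the simplest possible case, a single qubit on the loop that interacts with nothing else, Deutsch's condition reduces to asking that the state ρ be unchanged by one trip round: ρ = UρU†. The sketch below checks that condition by hand for U = NOT, the "go back and flip yourself" toy. It uses plain Python lists rather than Tower or Q-Sylvan, and the helper names `matmul`, `dagger` and `evolve` are hypothetical.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def dagger(m):
    """Conjugate transpose."""
    return [[complex(m[j][i]).conjugate() for j in range(len(m))]
            for i in range(len(m[0]))]


def evolve(rho, u):
    """One trip round the loop: rho -> U rho U-dagger."""
    return matmul(matmul(u, rho), dagger(u))


NOT = [[0, 1], [1, 0]]            # the traveller flips its earlier self

zero = [[1, 0], [0, 0]]           # density matrix for "definitely 0"
one = [[0, 0], [0, 1]]            # density matrix for "definitely 1"
mixed = [[0.5, 0], [0, 0.5]]      # equal mixture of the two

zero_is_consistent = evolve(zero, NOT) == zero
one_is_consistent = evolve(one, NOT) == one
mixed_is_consistent = evolve(mixed, NOT) == mixed
```

Neither definite answer survives the loop, since each is sent to the other, but the equal mixture of the two comes back unchanged. It is not the only fixed point of this particular map, but it is the one built from the two classical answers.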

The cost is obvious. These systems are intellectually demanding in a way the others are not. They are not trying to make the loop feel natural or ergonomic or particularly friendly. They are trying to make it correct. Which is admirable, but it does mean the average developer may look at the output and react as if they have accidentally opened a portal into a graduate seminar.

PositronicVariables: Consistency as a Conversation

After walking through all these other approaches, we end with PositronicVariables, which has a very different sort of personality.

It feels much closer to ordinary imperative programming than anything else in this list. You write code that looks broadly like normal code. You assign values. You read them. The strange part is that the variables themselves participate in the consistency process. Execution is effectively allowed to revisit itself across multiple passes until the values settle into a fixed point, or fail to do so.

Most of that can remain invisible while you are writing. You are not required to turn your program into a theorem prover, a probability model, or a piece of quantum formalism. The loop is there, but it is expressed in the same language as the rest of the code.

What becomes genuinely unusual is what happens when the loop stabilises on more than one answer.

Mariposa would stop the program and declare the timeline invalid. Tardis would assume you should never have tied that knot that way. Probabilistic systems would weigh the options against one another and hand back a distribution rather than a plain value. Constraint systems would ask whether the solutions are admissible but would not usually package plurality up as a value in this style. Reversible systems would avoid the situation. Quantum simulators could certainly represent it, but in a formal language most developers do not casually print to the console.

PositronicVariables, by contrast, is willing to say: the loop settled on any(1, 2).

On paper, that sounds almost unserious. A variable that can simply be “either this or that”? It can sound like the system is shrugging. But that is exactly what makes it interesting. It is not shrugging. It is reporting the result of the consistency process as data, without converting it into an exception, a discarded branch, a weighted distribution, or an object that requires specialist mathematical literacy to interpret.

This is the closest any of these systems comes to taking Deutsch at his word. If no single consistent state exists, but several individually self-consistent states do, then the outcome is not failure. It is plurality.

PositronicVariables does not require the programmer to pre-prove consistency. It does not search externally for a satisfying assignment. It does not hide disagreement behind inference. It runs the loop, lets the values negotiate, and reports what survived, even if what survived was two things at once.
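The general shape of that process can be sketched in a few lines of Python. This is emphatically not the PositronicVariables API. `settle` and `describe` are hypothetical names, and the sketch assumes "settling" means re-running a step on its own output until a value repeats, then reporting every value in the repeating cycle.

```python
def settle(step, seed, max_passes=1000):
    """Feed `step` its own output until a value recurs, then return
    every value in the repeating cycle, sorted."""
    first_seen = {}   # value -> index of the pass that first produced it
    history = []
    value = seed
    for _ in range(max_passes):
        if value in first_seen:
            return sorted(set(history[first_seen[value]:]))
        first_seen[value] = len(history)
        history.append(value)
        value = step(value)
    raise RuntimeError("the loop did not settle within max_passes")


def describe(values):
    """Render a singular result plainly and a plural one as any(...)."""
    if len(values) == 1:
        return str(values[0])
    return "any(" + ", ".join(str(v) for v in values) + ")"


# A loop with one self-consistent answer: x = (x + 10) // 2 settles on 9.
singular = describe(settle(lambda x: (x + 10) // 2, seed=0))

# A loop whose two ends disagree: x = 3 - x swings between 1 and 2 for ever.
plural = describe(settle(lambda x: 3 - x, seed=1))
```

The second loop never converges on one number, yet it is perfectly stable: it visits the same two values in turn, and the sketch returns both rather than raising. A loop that never repeats at all, such as `x + 1`, still ends in an error once `max_passes` is exhausted.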

That is not just a neat implementation detail. It is a very specific answer to the paradox.

Why This Framing Matters

Seen through Deutsch’s lens, these systems stop looking like unrelated curiosities and start looking like different philosophies of the same underlying problem.

They are all dealing, in one form or another, with feedback that is not merely sequential. A value depends on the very context it helps create. Cause and effect stop being a line and become a loop. Once that happens, the real question is no longer whether you have a paradox. The question is what sort of system you have built to cope with one.

Some systems halt the moment the loop contradicts itself. Some insist the loop must already be well-behaved before execution begins. Some search for a satisfying assignment. Some weigh histories by plausibility and discard the impossible. Some enforce reversibility so that disagreement never properly arises. Some represent multiplicity natively in a formal substrate built for it.

And one of them does something slightly bolder. It lets the loop run, watches what stabilises, and tells you the answer plainly, even when the plain answer is not singular.

That is why PositronicVariables feels unexpectedly faithful to Deutsch’s original idea. Deutsch was not trying to explain away the paradox. He was trying to describe what a system does when it encounters one and is not allowed to cheat. His answer was that the system finds the states that are self-consistent and keeps those.

It turns out software can do that too.

It just needed a library willing to let the timeline misbehave a little before deciding what, in the end, it could live with.